拡散モデルを使用して、いかに現実的なコンテンツを作成し、デザイン、音楽、映画などの分野をさまざまなアプリケーションで再定義できるかをご紹介します。

拡散モデルを使用して、いかに現実的なコンテンツを作成し、デザイン、音楽、映画などの分野をさまざまなアプリケーションで再定義できるかをご紹介します。

MidjourneyやSoraのような生成AIツールを使ってコンテンツを作成することがますます一般的になっており、これらのツールの中身に関心が高まっています。実際、最近の調査によると、94%の人が生成AIを扱うための新しいスキルを学ぶ意欲があることがわかっています。生成AIモデルの仕組みを理解することで、これらのツールをより効果的に使いこなし、最大限に活用することができます。

MidjourneyやSoraのようなツールの中核をなすのは、高度な拡散モデルです。これは、画像、動画、テキスト、オーディオなど、さまざまなアプリケーション向けのコンテンツを生成できる生成AIモデルです。例えば、拡散モデルは、TikTokやYouTube Shortsのようなソーシャルメディアプラットフォーム向けの短いマーケティング動画を制作するのに最適です。この記事では、拡散モデルの仕組みと、その活用分野について解説します。それでは、始めましょう!

物理学において、拡散とは、分子が高濃度領域から低濃度領域へ広がるプロセスのことです。拡散の概念は、ブラウン運動と密接に関連しています。ブラウン運動では、粒子が流体中の分子と衝突してランダムに動き、時間とともに徐々に広がっていきます。

これらの概念は、生成AIにおける拡散モデルの開発にインスピレーションを与えました。拡散モデルは、データに徐々にノイズを加え、そのプロセスを逆転させて、テキスト、画像、サウンドなどの新しい高品質なデータを生成することを学習します。これは、物理学における逆拡散の概念と似ています。理論的には、拡散を逆方向に追跡することで、粒子を元の状態に戻すことができます。同様に、拡散モデルは、加えられたノイズを逆転させて、ノイズの多い入力からリアルな新しいデータを作成することを学習します。

一般的に、拡散モデルのアーキテクチャは、主に2つのステップで構成されています。まず、モデルはデータセットに徐々にノイズを加えることを学習します。次に、このプロセスを逆転させ、データを元の状態に戻すように訓練されます。この仕組みについて詳しく見ていきましょう。

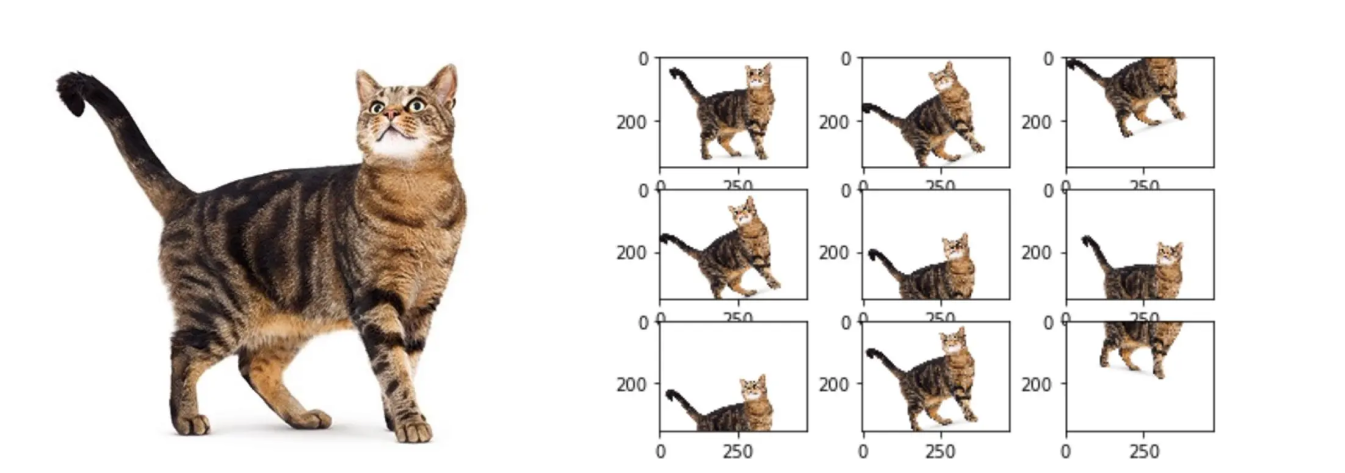

拡散モデルの中核に飛び込む前に、モデルの学習に使用するデータはすべて前処理する必要があることを覚えておくことが重要です。例えば、画像を生成するために拡散モデルをトレーニングする場合、画像のトレーニングデータセットを最初にクリーンアップする必要があります。画像データの前処理には、結果に影響を与える可能性のある外れ値の削除、すべての画像が同じスケールになるようにピクセル値を正規化、より多くの多様性をもたらすためのデータ拡張などが含まれます。データの前処理ステップは、トレーニングデータの品質を保証するのに役立ち、これは拡散モデルだけでなく、あらゆるAIモデルに当てはまります。

データの前処理後、次のステップは順方向拡散プロセスです。ここでは、画像を生成するための拡散モデルの学習に焦点を当てます。このプロセスは、ガウス分布のような単純な分布からサンプリングすることから始まります。言い換えれば、何らかのランダムノイズが選択されます。下の図に示すように、モデルは一連のステップで画像を徐々に変換します。画像は最初は鮮明ですが、各ステップを経るにつれてノイズがますます増加し、最終的にはほとんど完全なノイズになります。

各ステップは前のステップに基づいており、マルコフ連鎖を使用して、ノイズが制御された段階的な方法で追加されます。マルコフ連鎖とは、次の状態の確率が現在の状態のみに依存する数学的モデルです。これは、現在の状態に基づいて将来の結果を予測するために使用されます。各ステップでデータに複雑さが加わるため、元の画像データ分布の最も複雑なパターンと詳細を捉えることができます。ガウスノイズの追加は、拡散が進むにつれて多様でリアルなサンプルも生成します。

逆拡散プロセスは、順方向拡散プロセスがサンプルをノイズが多く複雑な状態に変換した後に開始されます。一連の逆変換を使用して、ノイズの多いサンプルを元の状態に徐々に戻します。ノイズを追加するプロセスを逆にするステップは、逆マルコフ連鎖によって導かれます。

.png)

逆プロセスの間、拡散モデルは、ランダムなノイズサンプルから始めて、それを徐々に洗練して鮮明で詳細な出力にすることで、新しいデータを生成することを学習します。生成されたデータは、最終的に元のデータセットに非常によく似たものになります。この機能こそが、拡散モデルを画像合成、データ補完、ノイズ除去などのタスクに最適にする理由です。次のセクションでは、拡散モデルのより多くの応用例を探ります。

段階的な拡散プロセスにより、拡散モデルは、データの高次元性に圧倒されることなく、複雑なデータ分布を効率的に生成できます。拡散モデルが優れているいくつかのアプリケーションを見てみましょう。

拡散モデルを使用して、グラフィカルなビジュアルコンテンツを迅速に生成できます。人間のデザイナーやアーティストは、入力スケッチ、レイアウト、または必要なものの簡単なアイデアを提供することができ、モデルはこれらのアイデアを実現できます。これにより、設計プロセス全体をスピードアップし、最初のコンセプトから最終製品まで幅広い新しい可能性を提供し、人間のデザイナーにとって貴重な時間を大幅に節約できます。

拡散モデルは、非常にユニークなサウンドスケープや音楽ノートを生成するためにも応用できます。ミュージシャンやアーティストが聴覚体験を視覚化し、創造するための新しい方法を提供します。以下に、サウンドおよび音楽制作の分野における拡散モデルのユースケースをいくつか紹介します。

拡散モデルのもう1つの興味深いユースケースは、映画やアニメーションクリップの作成です。これらを使用して、キャラクター、リアルな背景、さらにはシーン内の動的な要素を生成できます。拡散モデルの使用は、制作会社にとって大きな利点となり得ます。ワークフロー全体が効率化され、視覚的なストーリーテリングにおける実験と創造性が促進されます。これらのモデルを使用して作成されたクリップの中には、実際のアニメーションまたはフィルムクリップに匹敵するものもあります。これらのモデルを使用して、映画全体を作成することも可能です。

拡散モデルの応用例について学んだので、実際に試すことができる一般的な拡散モデルをいくつか見てみましょう。

拡散モデルは多くの業界でメリットをもたらしますが、それに伴う課題も念頭に置いておく必要があります。課題の1つは、トレーニングプロセスが非常にリソースを消費することです。ハードウェアアクセラレーションの進歩は役立ちますが、コストがかかる可能性があります。もう1つの問題は、拡散モデルが見えないデータに一般化する能力が限られていることです。特定のドメインに適応させるには、多くの微調整または再トレーニングが必要になる場合があります。

これらのモデルを現実世界のタスクに統合するには、独自の課題が伴います。AIが生成するものが、人間が意図するものと実際に一致することが重要です。また、これらのモデルがトレーニングに使用されたデータからバイアスを拾い上げて反映するリスクなど、倫理的な懸念もあります。それに加えて、ユーザーの期待を管理し、フィードバックに基づいてモデルを常に改良することは、これらのツールを可能な限り効果的かつ信頼性の高いものにするための継続的な取り組みとなります。

拡散モデルは、生成AIにおける魅力的な概念であり、さまざまな分野で高品質な画像、動画、サウンドの生成を支援します。計算需要や倫理的な懸念など、実装上の課題もありますが、AIコミュニティは常にその効率と影響力を向上させるために取り組んでいます。拡散モデルは進化を続け、映画、音楽制作、デジタルコンテンツ制作などの業界を変革する準備が整っています。

一緒に学び、探求しましょう!私たちのAIへの貢献については、GitHubリポジトリをご覧ください。最先端のAI技術で、製造業やヘルスケアなどの業界をどのように再定義しているかをご覧ください。